by Frederike Kaltheuner

When the former British deputy prime minister Nick Clegg joined Facebook in 2018, the company was immersed in a number of scandals. Cambridge Analytica had been harvesting personal data from Facebook profiles. UN human rights experts said the platform had played a role in facilitating the ethnic cleansing of the Rohingya in Myanmar. Its policies during the 2016 US presidential election had come under fire. Now Clegg has taken a top role as the company’s president of global affairs. Will he be able to tackle the seemingly endless problems with the way that Facebook – which recently rebranded as Meta – works?

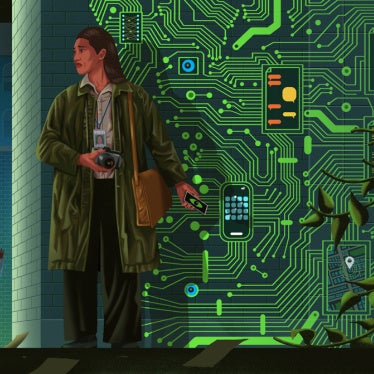

For better or worse, Meta and Google have become the infrastructure of the digital public sphere. On Facebook, people access the news, social movements grow, human rights abuses are documented and politicians engage with constituents. Herein lies the problem. No single company should hold this much power over the global public sphere.

Meta’s business model is built on pervasive surveillance, which is fundamentally incompatible with human rights. Tracking and profiling users intrudes on their privacy and feeds algorithms that promote and amplify divisive, sensationalist content. Studies show that such content earns more engagement and in turn reaps greater profits. The harms and risks that Facebook poses are unevenly distributed: online harassment can happen to anyone, but research shows that it disproportionately affects people who are marginalised because of their gender, race, ethnicity, religion or identity. And while disinformation is a global phenomenon, the effects are particularly severe in fragile democracies.

Despite his new title, Clegg alone won’t be able to fix these problems. But there are several things he should do to protect the human rights of Meta’s users. To begin with, he should listen to human rights activists. For years, they have recommended that Facebook conduct human rights due diligence before expanding into new countries, introducing new products or making changes to its services. They have also recommended the company invest more in moderating content to effectively respond to human rights risks wherever people use its platforms.

The likelihood of online speech causing harm, as it did in Myanmar, is inextricably linked to the inequality and discrimination that exist in a society. Meta needs to invest significantly in local expertise that can shed light on these problems. Over the past decade, Facebook has rushed to capture markets without fully understanding the societies and political environments in which it operates. It has targeted countries in Africa, Asia and Latin America, promoting a Facebook-centric version of the internet. It has entered into partnerships with telecommunications companies to provide free access to Facebook and a limited number of approved websites. It has bought up competitors such as WhatsApp and Instagram. This strategy has had devastating consequences, allowing Facebook to become the dominant player in information ecosystems.

It’s also essential for Meta to be more consistent, transparent and accountable in how it moderates content. Here, there is precedent: the Santa Clara principles on transparency and accountability in content moderation, developed by civil society and endorsed (though not implemented) by Facebook, lay out standards to guide these efforts. Among other things, they call for understandable rules and policies that are accessible to people around the world in the languages they speak, and for users to be able to meaningfully appeal decisions to remove or leave up content.

Meta should also be more transparent about the algorithms that shape what people see on its sites. The company must address the role that algorithms play in directing users toward harmful misinformation, and give users more agency to shape their online experiences. Facebook’s XCheck system has exempted celebrities, politicians and other high-profile users from the rules that apply to normal users. Instead of making different rules for powerful actors, social media platforms should prioritise the rights of ordinary people – particularly the most vulnerable among us.

As Meta tries to build the “metaverse”, these problems will only become more apparent. Digital environments that rely on extended reality (XR) technologies, such as virtual and augmented reality, are still at an early stage of development. But already there are signs that many of the same issues will apply in the metaverse. Virtual reality glasses can harvest user data, and some VR users have already reported a prevalence of online harassment and abuse in these settings.

So far, Meta hasn’t put its users’ rights at the centre of its business model. To do so would mean reckoning with its surveillance methods and radically increasing the resources it puts towards respecting the rights of its users globally. Rather than rebranding and pivoting to XR, where the potential for harm stands to grow exponentially, Meta should press pause and redirect its attention to tackling the very tangible problems it is creating in our present reality. The time to address this is now.