Does privacy depend on secrecy? The answer seems obvious until you think about it. Yes, much of the privacy we value takes place away from prying eyes, but not all of it. We also depend on a degree of privacy even in public. But increasingly invasive digital surveillance combined with artificial intelligence has put that "public" privacy in jeopardy.

I live in New York City. When I walk the streets of Manhattan, anyone could follow me and watch what I am doing. But in-person surveillance is time-consuming and expensive. As a former federal prosecutor, I used to oversee such surveillance. It involved teams of agents, rotated to avoid detection, with sometimes round-the-clock shifts. A government could amass such resources for only the gravest threats.

Most people know that when it comes to their daily lives, no one will bother. It's just not worth it. So when we see people or visit places that we'd like to keep private, we know for the most part we can.

But that is changing. Most of us keep our mobile phones with us, and they provide a real-time digital record of our movements that the phone or internet company can access. Even if we leave our phone at home, ubiquitous and cheap video cameras increasingly track our movements. As facial-recognition software gains sophistication, the government will be able to follow us anyway.

Artificial intelligence, in turn, has magnified the scale and invasiveness of public surveillance. Facial-recognition software powered by AI enables governments, and potentially others, to identify us with increasing accuracy as we walk the streets. We can then be matched to such personal records as our immigration status, voter registration, financial information and social media posts.

The modern ability to store large quantities of data means that these tools can be used to track us not only prospectively but also retroactively, recreating our past in a way that surveillance agents could never do. And unlike fingerprinting, facial-recognition technology can tell governments or companies not only whether we have been at a particular crime scene but also, potentially, many other places we have been. As police departments succumb to the inevitable temptation to intrude more, an all-knowing Big Brother looms. Fear of this possibility, along with the danger of mistaken identification, was behind San Francisco's recent decision to bar police and other city agencies from using this technology.

In the United States, the Supreme Court has started to recognize the danger of public surveillance. In Carpenter v. United States (2018), the court held that "an individual maintains a legitimate expectation of privacy in the record of his physical movements as captured through" monitoring of a cellphone's location. Like other privacy rights, this right is conditional. If the government suspects wrongdoing, it can always seek judicial approval to monitor a particular person after demonstrating reasonable grounds to believe that he or she has committed or is planning a crime. But absent a judicial order, the government should not be allowed to produce a database of our movements.

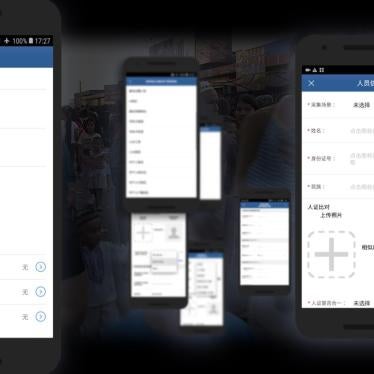

China's deployment of surveillance tools illustrates why we need a global right to privacy in public places. Surveillance cameras blanket the country, equipped not only with facial-recognition capabilities but also with the ability to recognize objects and license plates. Chinese police aspire to match that surveillance information with biometric data that they collect widely, including DNA and voice samples. The aim of this big-data analysis is to identify people deemed politically suspicious, a good illustration of how the right to privacy also implicates the right to freedom of expression. This dystopia is enabled by a complete lack of legal protections against state surveillance in China, not to mention the absence of an independent judiciary to enforce them or a free media to expose breaches.

These technologies are most visible in China's repression of Turkic Muslims in the Xinjiang region of western China, where the government is detaining an estimated 1 million people and subjecting them to forced indoctrination. Human Rights Watch has exposed police use of a big-data system to monitor the region's 22 million residents, selecting people for interrogation based on criteria such as whether they use "suspicious network tools" like WhatsApp or "too much" electricity.

To make matters worse, the Chinese government is actively researching ways to use personal data for social control and behavioral engineering, including a "social credit system" that would reward and punish people based on what authorities consider good and bad behavior.

The Chinese government is not alone. Companies based in Israel, Germany, Italy, and Britain are developing these technologies and selling them to governments that use them to monitor and repress their citizens. Stronger global controls on the export and sale of these tools are urgently needed. More fundamentally, we must establish a clear right to privacy in public.

Kenneth Roth is executive director of Human Rights Watch. Before that, Roth was a federal prosecutor in New York and for the Iran-Contra investigation in Washington.