As the coordinator of the Campaign to Stop Killer Robots since its inception five years ago, I’ve been working to preemptively ban fully autonomous weapons.

As you might imagine, so much has changed since 2013. Such major military powers as the United States, China, Israel, Russia, South Korea and the United Kingdom continue to sink funds and resources into the development of weapons systems with ever-decreasing levels of human control over the critical functions of selecting and attacking targets.

More states have acquired precursor weapons systems, notably armed remote-controlled drones.

Concurrent with these investments is a growing international consensus that new international law is urgently needed to address this unrestrained trend towards autonomous warfare. The goal of creating a new ban treaty has been endorsed by 22 countries, dozens of Nobel Peace Laureates and faith leaders, more than 60 non-governmental organizations, and thousands of artificial intelligence experts, roboticists and scientists.

The issue is simple: Fully autonomous weapons will cross a moral line that should never be crossed by permitting machines to make the determination to take a human life on the battlefield or in policing, border control and other circumstances.

Qualities such as compassion and empathy in addition to human experience make humans uniquely qualified to make the moral decision to apply force in particular situations. No technological improvements can solve the fundamental challenge to humanity that will come from delegating a life-and-death decision to a machine.

Any killing orchestrated by a fully autonomous weapon is arguably inherently wrong since machines are unable to exercise human judgement and compassion.

The question of what to do about killer robots has been hotly debated and this issue continues to seize the world’s attention. The campaign was pleased to raise its concerns at a public event on artificial intelligence convened by the organizers of the Munich Conference in mid-February, where several delegates made tentative statements of support for the call to ban such weapons. This panel on AI and conflict marked a unique opportunity for the campaign to highlight multiple ethical, legal, operational, moral, proliferation, technical and other issues.

The head of Germany’s cyber command, Lieutenant General Ludwig Leinhos, told the conference’s opening event on AI and conflict: “We have a very clear position. We have no intention of procuring” fully autonomous weapons.

Some have described this comment as a new official German position in support of banning such fully autonomous weapons. Others claim that Australia, Canada, and the United Kingdom are similarly wholeheartedly behind the ban call.

But statements about an intention not to acquire such weapons are not the same as committing to and actively working toward a legally binding preemptive ban. And it is unclear what Germany or the UK or some others mean by fully autonomous weapons systems, particularly the nature of human control they see as required.

Any statements renouncing these weapons systems are, of course, a step in the right direction. They show that the debate within the armed forces of various countries is increasingly focusing not only on questions of the potential legality of fully autonomous weapons, but also on the much bigger ethical, proliferation, and other issues.

Yet statements alone are insufficient to deal with all the concerns raised about this far-reaching move toward greater autonomy in weapons systems.

Similarly, non-binding measures such as codes of conduct and greater transparency by programmers might sound appealing, but are no replacement for putting in place normative measures in the form of new law.

Binding legislation is required through a new international treaty and national laws to require the retention of meaningful human control over future weapons systems and individual attacks. Reaching this goal means countries must determine where to draw the line to preemptively ban development, production, and use of fully autonomous weapons.

During another Munich discussion, Eric Schmidt of Google was asked for his views on the call to ban fully autonomous weapons. He responded that “these technologies have serious errors in them and should not be used in life decisions.” He said there are “too many errors” and they shouldn’t be “put in charge of command and control.”

Estonia’s President Kersti Kaljulaid did not directly comment on the concerns raised by fully autonomous weapons during our event, but subsequently told another Munich panel that countries at the United Nations General Assembly should vote on “banning artificial intelligence for military purposes.”

Such proposals have merit and could spur more urgent and concrete action. They could help focus greater attention on the ongoing diplomatic process at the Convention on Conventional Weapons (CCW), where some 90 countries are considering what to do about these weapons systems. The campaign has criticized the CCW talks for aiming too low and going too slow, but with political will, rapid progress is possible.

Another participant in the conference’s opening event on AI and conflict was former NATO Secretary-General Anders Fogh Rasmussen, who succinctly homed in on the challenge. He predicted that without a legal prohibition these weapons could create instability and warned: “Soon, you may see swarms of robots attacking a country … The robots can be easily deployed, they don’t get tired, they don’t get bored.”

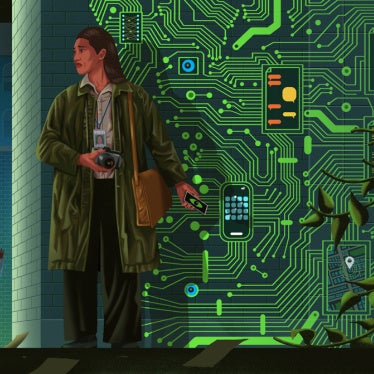

Our campaign understands the need to use striking visuals to communicate this challenge. Upon launching the coalition in 2013, we deployed a remote-controlled “robot” outside the British Houses of Parliament that has featured in coverage of this topic in the years since.

Yet we have been careful not to depict fully autonomous weapons in humanoid form, both to avoid confusing science fiction with actual trends and to avoid the dangers of anthropomorphizing this concern.

It was therefore with some reluctance that our coordinator shared the stage in Munich with “Sophia the robot.”

Created by David Hanson, who previously worked for Disney animatronics, Sophia was in the news last year after addressing an “AI for Good” summit at the United Nations and after it was controversially granted citizenship by Saudi Arabia.

Sophia opened the AI panel event and gave scripted answers to predetermined questions. Some of the responses were borderline racist (asking why the audience of mainly youth were not wearing “lederhosen”) and sexist (scolded by the moderator for “flirting” after saying “nice uniform” to the general). Most were vacuous — “hurting someone is not OK unless you have to defend yourself” and “robots could potentially protect humans from each other.”

And some were just plain misleading. Upon inviting the Campaign to Stop Killer Robots coordinator to the stage, Sophia remarked, “Please let me assure you that I am not a killer robot.” The campaign has never claimed that Sophia was anything of the sort.

Show robots may have their place and can certainly attract media coverage, but Sophia was created with deception in mind, to give the impression of “intelligence.” Some with less experience of robots may see this machine as more sophisticated than it is.

Similarly, there is an increasing tendency to hype the state of developments in artificial intelligence in particular states (China, Russia, the US) as an arms race or some other kind of deadly competition. This could adversely influence not just those countries’ policy decisions about autonomous weapons but also their ability to comply with international law.

Why not focus, instead, on an imminent concern raised by AI: namely, the complex, challenging, but achievable task of regulating autonomy in weapons systems?