Fueled by access to large data sets and powerful computers, machine learning and artificial intelligence can offer significant benefits to society. At the same time, left unchecked, these rapidly expanding technologies can pose serious risks to human rights by, for example, replicating biases, hindering due process and undermining the laws of war.

To address these concerns, Human Rights Watch and a coalition of rights and technology groups recently joined in a landmark statement on human rights standards for machine learning.

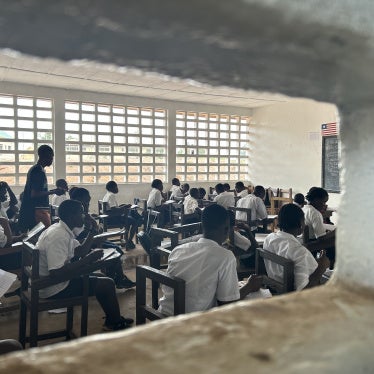

Known as the Toronto Declaration, the statement calls on governments and companies to ensure that machine learning applications respect the principles of equality and non-discrimination. The document articulates the human rights norms that the public and private sectors should meet to ensure that algorithms used in a wide array of fields – from policing and criminal justice to employment and education – are applied equally and fairly, and that those who believe their rights have been violated have a meaningful avenue for redress.

While there has been a robust dialogue on ethics and artificial intelligence, the Declaration emphasizes the centrality and applicability of human rights law, which is designed to protect rights and provide remedies where human beings are harmed.

The Declaration focuses on machine learning and the rights to equality and non-discrimination, but many of the principles apply to other artificial intelligence systems. In addition, machine learning and artificial intelligence both impact a broad array of human rights, including the right to privacy, freedom of expression, participation in cultural life, the right to remedy, and the right to life. More work is needed to ensure that all human rights are protected as artificial intelligence increasingly touches nearly all aspects of modern life.