What are our concerns about someone owning Twitter who has denounced companies having a role in moderating content?

Let’s take a step back. First of all, it’s important to consider the implications of a single individual purchasing and exercising control over a company like Twitter.

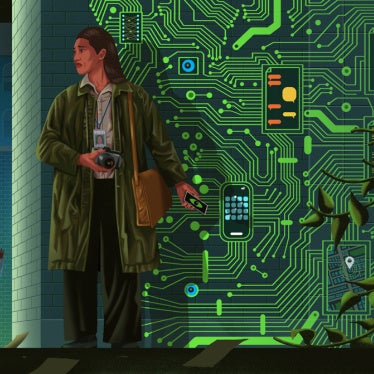

That’s because Twitter isn’t just any company. In the past decade, it’s become the space where we read about the news, where social movements like #MeToo or #BlackLivesMatter form and organize, where journalists and politicians get a sense of what the mood is like and what the debates are. This is true globally, but it is especially the case in contexts where there is limited press freedom. Decisions made at Twitter about what can be posted, and what content is amplified and how it’s amplified, have far-reaching consequences for human rights and for societies around the world.

What are Twitter’s or any social media company’s human rights responsibilities?

Companies have a responsibility to respect human rights and address and remedy abuses they cause or contribute to under the United Nations Guiding Principles on Business and Human Rights. They should do this in a transparent and accountable way, enforcing their actions in a consistent manner.

What does that mean in practice?

For example, we recently analyzed how platforms, including Twitter, have responded to the war in Ukraine. Especially at the beginning of the invasion in February, platforms seemed to be making quite far-reaching content decisions on the fly. For example, in late March, Meta, the parent company to Facebook and Instagram, changed its policies on Ukraine content daily. The New York Times reported that this caused confusion for content moderators tasked with implementing these decisions.

We still don’t know how many content moderators employed by major platforms are fluent in Ukrainian. We also don’t know if they are able to assess content in ways that are unbiased and truly independent. And because independent researchers lack access to platforms’ data, we often can’t assess whether interventions and policy changes had their intended effect.

How else are they falling short?

For years organizations like Amnesty International have tracked the disturbing persistence of hate speech on Twitter – especially violent and abusive speech against women, including lesbian, bisexual, and transgender women.

I think this is something I really want to emphasize: the risks and harms that come from underinvesting in content moderation disproportionately affect people who are marginalized. We’ve also seen how social media platforms can become a place where abuse against people marginalized based on ethnicity or religion can escalate.

In this debate about platform accountability, Elon Musk calls himself a “free speech absolutist.” As a human rights organization, we advocate for the right to freedom of expression, but it’s not an absolute right. Treating it as one has real consequences.

What does that mean, “not an absolute right”?

Freedom of expression can be subject to restrictions or boundaries, including to ensure that speech does not infringe on other people’s rights. For example, if you’re inciting violence, governments may need to restrict your speech to keep people safe.

Also, companies are not governments, and they have more leeway than governments do to shape and impose limits on speech on their platforms to ensure that they’re not contributing to other human rights harms. In fact, they have to set boundaries and restrictions on speech in order to meet their international human rights responsibilities.

For example, online violence and abuse is disproportionately targeted at women or people of color, which drives people off platforms. This harassment inhibits their ability to use social media and participate in the global public sphere. Let’s also keep in mind that platform design decisions – from the recommender algorithms that are used to decisions about how content can be shared and amplified – always, inevitably shape what can and cannot be said, and what gets attention.

An absolutist view on freedom of expression doesn’t wrestle with these complex challenges – to the detriment of those on the receiving end of harmful speech.

So how should platforms be moderating content?

Removing content affects a whole range of rights. It can also easily lead to censorship. But people also use platforms to form movements or run their businesses. As a result, removing content or accounts also affects other rights, such as the right to association, or economic rights. That’s why it’s important that platforms have strong systems for addressing human rights issues.

When it comes to companies, the Santa Clara principles on content moderation provide a good framework. These have been endorsed, although not implemented, by some tech companies.

What are the Santa Clara principles?

They’re a set of principles created by civil society groups to set standards for platforms’ content moderation practices informed by due process and human rights. For example, the principles state that companies should publish the number of posts removed and accounts suspended. The principles also spell out precisely what information is needed to make sure platforms provide truly meaningful transparency and accountability.

Also, whenever platforms do take down content, they should notify the user who published the content and give a reason. And you should be able to appeal this in the language or dialect you speak if you feel the company has wrongly taken down your content.

What should governments do?

Social media companies have become the de facto infrastructure of the global public sphere. The decisions they make have far-reaching consequences on people’s lives and rights. We can’t just blindly trust that they will do the right thing and regulate themselves.

What we need is more democratic oversight of powerful technology companies. This includes good regulation that is grounded in international human rights standards, but also research and investigations by watchdog organizations that closely monitor what platforms are doing. That’s one reason why Human Rights Watch launched the new Technology and Rights Division this year. We wanted to be more focused on investigating how powerful actors, including dominant platforms, use technology to change societies, and their impact on people’s rights.

And we still often lack the most basic information to be able to hold tech companies and their owners accountable. It starts with the way they’re collecting data and it continues to more complex issues, such as how artificial intelligence systems work. We also investigate how governments and other powerful actors use and misuse technology.

The European Union this week agreed on a law to regulate platforms. What does this mean for Twitter and Musk?

The EU agreement is called the Digital Services Act package. This legal framework is designed to regulate internet platforms, including how platforms recommend content, how they target advertising, and how they take down illegal content. The package also includes the Digital Markets Act, designed to limit the market power of big online platforms. In general, we think this is very positive, although we also raised concerns about some aspects of the draft version of the law. We still haven’t seen the final text, so it’s difficult to comment on.

Quite a few EU officials expressed a real sense of relief that Twitter will soon need to comply with rules regardless of who is in charge.

It’s important to stress that not all laws regulating platforms are positive. In fact, we’ve seen many very concerning laws with vague or overly broad definitions of illegal content, or limited judicial oversight, which can lead, and in some cases have led, to censorship. There’s often a fine line between fighting harm and silencing dissent.

Anything else that you’d like to add?

We’re finding ourselves at a pivotal time in history. We’re still in a global pandemic. We’re in a climate crisis. There are multiple wars and conflicts happening around the world. That’s why we absolutely need to make sure that the technological infrastructure we depend on to access critical information, to form social movements, and to organize supports – as opposed to undermines – our rights.

This is why it’s so important that we get this right.

*This interview has been edited and condensed.