What is the IJOP, and when did we first learn about it?

The Integrated Joint Operations Platform (IJOP) is a system of systems. It gathers information from sources including, but not limited to, gas stations, checkpoints on the street, and access-controlled areas such as communities and schools. It pulls information from these facilities, as well as from CCTV cameras, integrates it, and monitors it for “unusual” activity or behavior, which triggers alerts that authorities then investigate. We reported on the IJOP system in February 2018 by pulling together procurement documents. At the time, we found an app that government officials and police officers in Xinjiang use to communicate with the IJOP system. We reverse engineered the app and uncovered the kinds of behaviors and people this mass surveillance system targets, which gives us a window into the inner workings of one of the world’s most intrusive mass surveillance systems.

How does the app work?

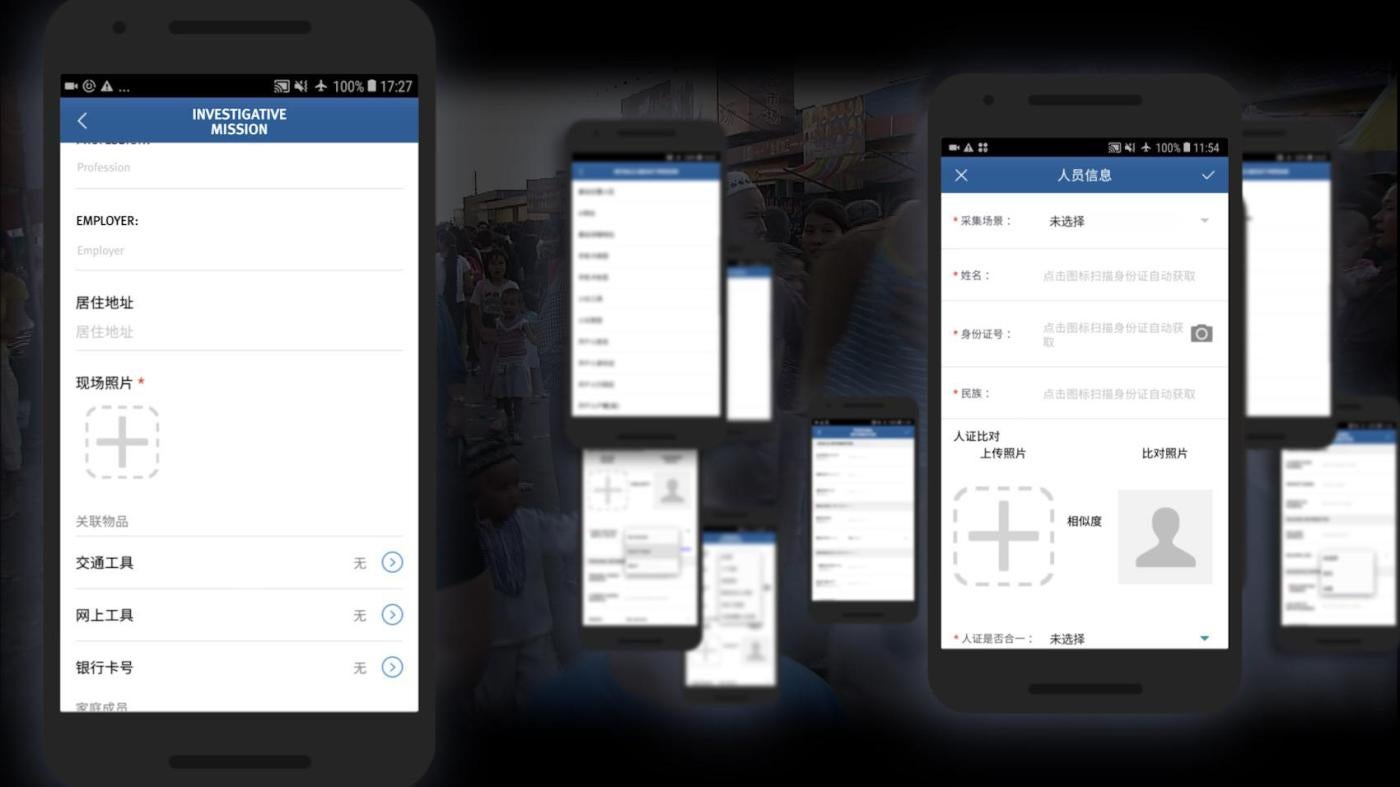

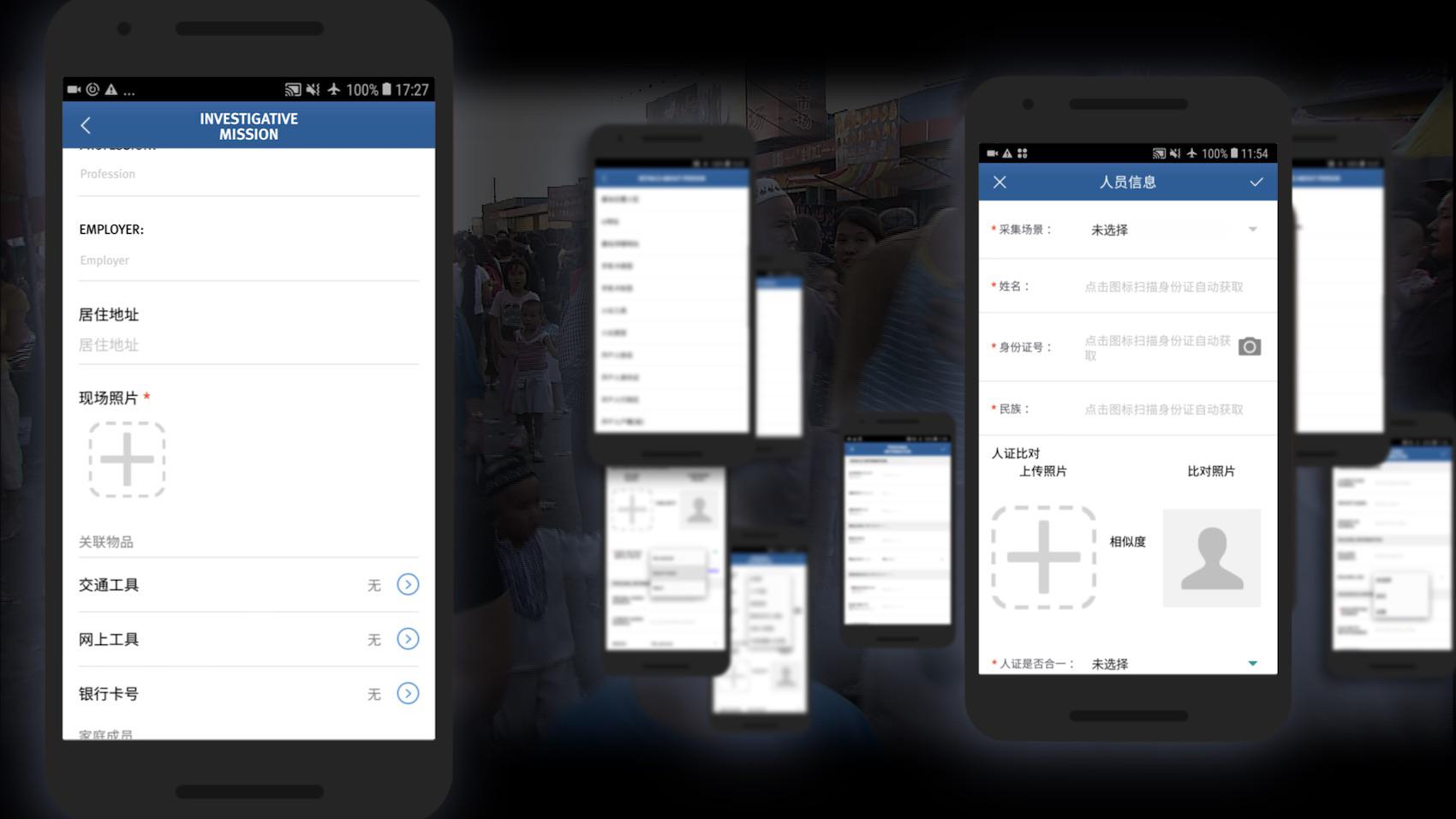

The app provides three broad functions: it collects data, reports on suspicious activities or circumstances, and prompts investigative missions. When the app finds someone or something it considers suspicious, it sends an alert to a nearby government official, who is expected to find out more. The official is supposed to provide feedback and note whether the person requires further investigation.

In some cases, the app also prompts the official to search the person’s phone for software, network tools, or content deemed problematic. Do they have any suspicious software, such as WhatsApp? Have they visited websites that contain “violent audio-visual content”? Do they use a VPN, which encrypts data to prevent the authorities from intercepting and blocking it? Officials log the result of the phone search in the IJOP app.

How did you find the app and determine its authenticity?

The app was publicly available when we were searching for information about the IJOP, so we downloaded it. According to the app’s source code, the first version was released in December 2016.

Every app has a digital certificate that functions as the signature of the developer. The certificate showed that the app was developed by the company Hebei Far East Communication System Engineering Company (HBFEC). We found another app officially released by HBFEC on a Chinese government website, and when we compared the two, the digital signatures matched. HBFEC was – when the IJOP was deployed – a fully owned subsidiary of China Electronics Technology Group Corporation (CETC), a major state-owned military contractor in China. We also know, based on procurement documents, that CETC is the developer of IJOP. So, we were able to verify that the app is developed by HBFEC and used in Xinjiang.
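The signature comparison described above can be illustrated with a minimal sketch. It assumes the signing certificate of each app has already been exported as raw DER bytes with a separate tool; the function names `cert_fingerprint` and `same_signer` are hypothetical, and this is not necessarily the procedure Human Rights Watch followed.

```python
import hashlib


def cert_fingerprint(der_bytes: bytes) -> str:
    """Return the SHA-256 fingerprint (hex) of a DER-encoded certificate."""
    return hashlib.sha256(der_bytes).hexdigest()


def same_signer(cert_a: bytes, cert_b: bytes) -> bool:
    """True when both apps carry a byte-identical signing certificate.

    Assumption: cert_a and cert_b are the exported signing certificates
    of the two apps being compared, not the app packages themselves.
    """
    return cert_fingerprint(cert_a) == cert_fingerprint(cert_b)
```

Matching fingerprints indicate that both apps were signed with the same certificate. Linking that certificate to a specific company still depends on outside evidence, such as the officially published app mentioned above.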

How did you reverse engineer the app?

When I found the app, I sent a note to our director of information security, who has experience looking at apps in the context of surveillance. He sent me a number of files with the “text strings” showing Chinese phrases, but it was really difficult to know how they relate to each other because they were out of context. I saw text in Chinese about “religious atmosphere” and “package delivery” and “phone software,” and something about “suspiciousness” and “arrest,” but that’s all I could make out.
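To give a sense of what “text strings” pulled from an app look like, here is a minimal sketch that extracts runs of Chinese characters from raw bytes. The function name `extract_cjk_strings`, the UTF-8 assumption, and the sample bytes are illustrative only; they are not the tooling our information security director used.

```python
import re

# Runs of two or more characters from the CJK Unified Ideographs block.
CJK_RUN = re.compile(r"[\u4e00-\u9fff]{2,}")


def extract_cjk_strings(data: bytes) -> list[str]:
    """Return the Chinese-character runs found in a blob of bytes.

    Assumption: the strings are stored as UTF-8. Undecodable bytes are
    replaced with U+FFFD so that they cannot glue two separate runs together.
    """
    text = data.decode("utf-8", errors="replace")
    return CJK_RUN.findall(text)


# Made-up sample: binary noise surrounding two terms from the interview.
sample = b"\x00\x7fid=" + "野阿吉".encode("utf-8") + b"\xff\xfe;" + "阿吉".encode("utf-8") + b"\x00"
phrases = extract_cjk_strings(sample)
```

The result is a flat list of phrases with no indication of where they appear or how they relate to each other, which is why the strings alone were so hard to interpret.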

Technically, we were doing something we had never done before at Human Rights Watch, and there really was no body of work we could follow. After we completed an initial examination of the app’s content, we started working with Cure53, a Berlin-based security company. I worked with them for a very intense period. We decided to do a passive investigation, which means we did not attempt to connect to the server or log in to the app.

We put our very distinct sets of expertise together. There were a lot of things they couldn’t decipher because they couldn’t read Chinese, and things I couldn’t decipher because I couldn’t read code. The source code may give you a phrase in Chinese, but you need to know the context in Xinjiang to know what it means. And some of those terms we had not encountered before.

Can you give me an example?

The app goes through 36 “person types” that are deemed suspicious. Some of them are fairly straightforward, but others aren’t. For example, there was a reference to the “followers of Six Lines.” We asked one of our key informants in Xinjiang, who said that these lines refer to religious scholars who are determined by the authorities to be threatening.

There was another term, 野阿吉. It turns out that 阿吉 is Mandarin for Hajj, the Muslim pilgrimage to Mecca. So 野阿吉 refers to people who went on Hajj without authorization, or “unofficial Hajj.” And I know from interviewing people and looking at official documents that the Chinese government prohibits Hajj for Muslims unless it is organized by the state. So anyone who has gone on “unofficial Hajj” is a suspicious “person type.”

Can you explain how information about electricity usage and package delivery is fed into the app?

All we know is that the IJOP receives information about gas usage, electricity usage, and package delivery. Presumably, there is a system tracking this information and feeding it to the IJOP system, which draws on some of that data in populating the app.

Many mass surveillance practices described in the report do not appear to be authorized by Chinese law. In fact, they seem to violate it. Chinese law does not generally empower the police to monitor electricity usage. No Chinese law or regulation specifies the length of time people are allowed to stay abroad, or prohibits extended stays.

The app even includes information about whether someone is using the front door or back door of their home. How does the IJOP system collect that information?

The Xinjiang authorities have dispatched more than 1 million government officials into the Xinjiang region. There are officials who stay in the homes of Turkic Muslims through the “Becoming Family” program, and government officials are dispatched to notice if things are “unusual.” Did the family say something religious? Did they exit through the front door or the back door? (Going through the back door is presumably more suspicious.) We know the IJOP is connected to CCTV cameras enabled with facial recognition and night vision. It is also gathering a wealth of information from the numerous checkpoints throughout the region. Still, there is some information logged in the IJOP app whose sources aren’t clear to us.

Was there a particular revelation that surprised you?

The general intrusiveness of the mass surveillance system, the peaceful behavior for which the authorities are targeting people – those are problems I’m familiar with, so honestly that was unsurprising. What was really surprising was how much data there was about who exactly is targeted, what kinds of behaviors are suspect, which 51 network tools are considered suspicious, and which categories of people are subjected to heightened surveillance. The Chinese government, while controlling people’s movements, is also controlling access to the region. It’s trying to say, “Everything is going well in Xinjiang, there are no abuses here, it’s just all misinterpretation.” The app, however, provides hard-coded proof, and that’s difficult to refute. Reverse engineering the IJOP app is like obtaining a police manual, and having that information is incredible considering it’s a highly controlled region in China.

The IJOP also provides a lot of details about what we had suspected but didn’t fully understand. For example, we knew that there are the checkpoints, but now we know that some checkpoints are equipped with “data doors,” which covertly collect information from people, such as IMEI numbers, which are unique identifiers assigned to devices, and log this data for identification and tracking purposes.

What is the purpose of this surveillance?

The Chinese government is putting in place systems in Xinjiang, and throughout China, with the evident aim of total social control. The mass surveillance system presents the possibility of real-time, all-encompassing surveillance. Chinese authorities are collecting an oceanic amount of data about people to develop a fine-grained understanding of human behavior, and then to control that behavior. Political education is part of that effort to re-engineer people’s behavior. But I also think it’s important to note that this system, though intrusive, is also crude and labor-intensive. I don’t think these systems are very sophisticated, and they require a huge number of police and resources to operate.

How has the Chinese government responded?

Initially, the Chinese government denied that the detention camps existed, but then Xinjiang exploded in the news in the summer of 2018. The government shifted its position and said the camps were vocational training centers and that people were there voluntarily. It even invited government officials from friendly Muslim-majority countries, including Kazakhstan and Pakistan, to visit the facilities on state-managed, orchestrated tours. The Chinese government has moved from flat denial to defending its position because of the reporting.

What can other governments do?

Concerned governments need to think seriously about export controls and targeted sanctions, such as those available under the US Global Magnitsky Act, including visa bans and asset freezes, against senior Chinese officials linked to abuses in Xinjiang. They should set the bar higher on privacy protections so that companies like the one that produced this app don’t succeed in setting the standards.